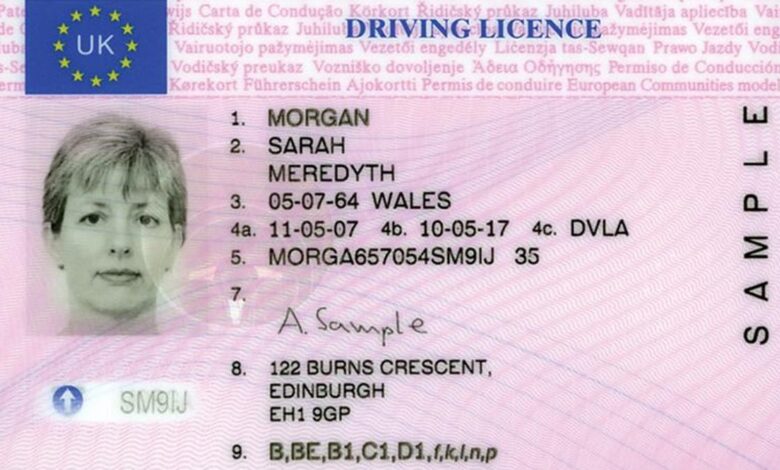

UK Police To Use Driver License Photos In Facial Recognition Searches

A recent change to a single clause in a UK criminal justice law will soon allow police or the National Crime Agency to run facial recognition searches against a database of photos of the country's 50 million driver's license holders, in addition to social media and surveillance images. UK officers have recently taken to using live facial recognition at major public events, including protests, which has brought concerns about privacy, discrimination, and freedom of expression and assembly to the fore. Trawling a far larger image database will only deepen these concerns.

One opponent of the law, Professor Peter Fussey, a former independent reviewer of law enforcement's use of facial recognition, argues that the program lacks sufficient oversight and is concerned by studies indicating that the technology is more prone to misidentifying Black and Asian faces.

“This constitutes another example of how facial recognition surveillance is becoming extended without clear limits or independent oversight of its use,” Fussey told the Guardian. “The minister highlights how such technologies are useful and convenient. That police find such technologies useful or convenient is not sufficient justification to override the legal human rights protections they are also obliged to uphold.”

Another critic, Carole McCartney, a professor of law and criminal justice at the University of Leicester, expressed concern that the public had no say in the change.

“This is another slide down the ‘slippery slope’ of allowing police access to whatever data they so choose–with little or no safeguards,” said McCartney. “Where is the public debate? How is this legitimate if the public don’t accept the use of the DVLA (Driver and Vehicle Licensing Agency) and passport databases in this way?”

Many U.S. states allow driver's license photos to be included in law enforcement facial recognition databases, with varying results. Florida's system was initially limited to mug shots, but after September 11th it expanded to include anyone travelling through an airport. Once it grew to cover photos of every driver in the state, it really began “helping” officers identify thousands of suspects. Police claim the error rate has fallen below 0.3 percent, but that figure is based on clear, well-lit images, while Florida cops have in some cases run the software on blurry ATM footage taken at night.

A study of police facial recognition trials in London found that the software flagged 42 potential matches, but only eight of them could be verified as correct: fewer than one in five. That is a shaky foundation on which to build a legal case. It is impossible to tell how many false arrests this type of surveillance software has led to in the U.S., and UK citizens may have good reason to worry that the same could happen there.