Google opens its experimental AI chatbot for public testing

Google has opened its AI Test Kitchen mobile app to give people limited hands-on experience with its latest advances in AI, such as its conversational model LaMDA.

Google announced AI Test Kitchen in May, along with the second version of LaMDA (Language Model for Dialogue Applications), and is now allowing the public to test parts of what it believes to be the future of human-computer interaction.

Google CEO Sundar Pichai said at the time.

AI Test Kitchen is part of Google’s plan to ensure its technology is developed with some safety rails in place. Anyone can join the waiting list for AI Test Kitchen. Initially, it will be offered to small groups in the US. The Android app is available now, while the iOS app will launch “in the next few weeks”.

During registration, users need to agree to a few things, including “I will not include any personal information about myself or others in my interactions with these demos”.

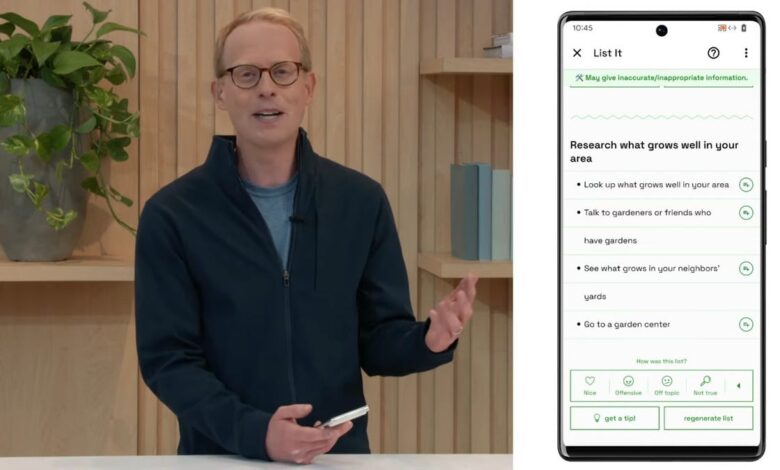

Similar to Meta’s recent public preview of its AI chatbot model, BlenderBot 3, Google also warned that LaMDA’s initial previews “may display inaccurate or inappropriate content”. Meta warned, when it opened BlenderBot 3 to US residents, that the chatbot can ‘forget’ that it’s a bot and can “say things we’re not proud of”.

The two companies admit that their AI can sometimes be politically incorrect, as Microsoft’s Tay chatbot was in 2016 after the public fed it nasty inputs. And like Meta, Google says LaMDA has undergone “significant safety improvements” to stop it from giving inaccurate and offensive responses.

But unlike Meta, Google seems to be taking a more restrictive approach, setting boundaries on how the public can communicate with LaMDA. Until now, Google had only exposed LaMDA to its own employees. Making it public could allow Google to accelerate the rate at which it improves the quality of the model’s answers.

Google is releasing AI Test Kitchen as a set of demos. The first, ‘Imagine it’, lets you name a place, after which the AI offers paths to “explore your imagination”.

The second demo ‘List it’ allows you to ‘share a goal or topic’ which LaMDA then tries to break down into a list of useful subtasks.

The third demo is ‘Talk about it (Dogs edition)’, which seems to be the most free-ranging experiment, albeit limited to the subject of dogs: “You can have a fun, open-ended conversation about dogs and only dogs, which explores LaMDA’s ability to stay on topic even when you try to get off topic,” said Google.

Both LaMDA and BlenderBot 3 pursue best-in-class performance among language models that simulate dialogue between a computer and a human.

LaMDA is a 137 billion-parameter large language model, while Meta’s BlenderBot 3 is a “175 billion-parameter dialogue model capable of open-domain conversation with internet access and long-term memory”.

Google’s internal tests have focused on improving the safety of the AI. Google says it has run adversarial tests to find new flaws in the model and recruited ‘red teams’, attack experts who can stress the model in the way an unrestricted public might, who “discovered more malicious, but subtle, results,” according to Tris Warkentin of Google Research and Josh Woodward of Labs at Google.

While Google wants to be safe and avoid having its AI say embarrassing things, it could also benefit from releasing the model into the wild to encounter human speech it can’t predict. Quite a dilemma. In contrast to the Google engineer who questioned whether LaMDA is sentient, Google highlights several limitations of the kind Microsoft’s Tay suffered from when exposed to the public.

“The model can misinterpret the intent behind identity terms and sometimes fail to generate a response when they are used because it has difficulty distinguishing between benign and adversarial prompts. It can also generate harmful or malicious responses based on biases in its training data, creating responses that are biased and misrepresent people based on gender or cultural background. These areas and more are still under active research,” say Warkentin and Woodward.

Google says the protections it has added so far have made its AI safer, but haven’t eliminated the risk. The protections include filtering out words or phrases that violate its policies, which “prohibit users from knowingly creating content that is sexually explicit; hateful or offensive; violent, dangerous, or illegal; or that discloses personal information.”

Also, users should not expect Google to delete anything they have said once they finish a LaMDA demo.

“I can delete my data while using a particular demo, but when I close the demo, my data is stored in such a way that Google can’t tell who provided it and can no longer fulfill any deletion requests,” the consent form states.